“Well, it’s all website-driven, and people don’t really care about domain names these days; it’s all subdomains on sites like Vercel.” Shut up. People love their ego domains.

Based

AI has been good at auto-completing things for me, but it almost always suggests things I already knew without even web searching. If I try to get advice about things I know nothing about (code-wise), it’s a really bad teacher: it skips steps and makes suggestions that don’t work at all.

I’m guessing there’s been no software explosion because AI is really only good for the “last 20%” of effort and can’t really breach 51% where it’s doing the majority of the driving.

Apropos to use the term “driving” I feel. Autonomous vehicles have largely been successful because the goal is clear (i.e. “take me to the grocery store”) and there’s a finite number of paths to reach the goal (no off-roading allowed). In programming, even if the goal is crystal clear, there really are an infinite number of solutions. If the driver (i.e. developer) doesn’t have a clear path and vision for the solution then AI will only add noise and throw you off track.

Also it’s just wrong a lot when I ask things I don’t know.

“Use `private_key` parameter instead of `pkey`.”

Alright, cool, good tip.

“Unknown parameter `private_key`.”

*jim face*

The best use I’ve found for “AI” in coding is making/updating readme files. If it can save me 30 min of tech debt I don’t have time for anyway that’s one positive use case.

I’ve given it old and new code (along with the existing readme, if there is one), then asked it to provide markdown for an updated readme with a changelog. Using that as a jumping-off point, I’d proofread it and add a few sentences or delete one or two things.

Who’d’ve thunk large language models would be good at language and not coding.

(I know, programming has languages too but there is far less available data on those languages used “talking” to each other.)

I’m learning coding right now in college and my professors are making sure we know how to effectively work with LLMs when coding.

It should never be used for generating code for anything other than examples. You should always remember that it will answer what is asked and nothing more, and you must account for this when adapting generated code for your own uses. It’s best to treat it like a frontend for parsing documentation and translating it into simpler language, or for translating a function from one language to another.

People are spending all this time trying to get good at prompting and feeling bad because they’re failing.

This whole thing is bullshit.

So if you’re a developer feeling pressured to adopt these tools — by your manager, your peers, or the general industry hysteria — trust your gut. If these tools feel clunky, if they’re slowing you down, if you’re confused how other people can be so productive, you’re not broken. The data backs up what you’re experiencing. You’re not falling behind by sticking with what you know works.

AI is not the first technology to do this to people. I’ve been a software engineer for nearly 20 years now, and I’ve seen this happen with other technologies. People convinced it’s making them super productive, others not getting the same gains and internalizing it, thinking they’re to blame rather than the software. The Java ecosystem has been full of shitty technologies like that for most of the time Java has existed. Spring is probably one of the most harmful examples.

I have no doubt it 10xs developers who could produce 0 code without it

“10×s developers who could produce 0 code without it”

Let me see; ten times nothin’, add nothin’, carry the nothin’…

I think it’s both true that you can’t really write an entire app with just AI… At least not easily.

But also I don’t buy that AI doesn’t make me more productive. I’m not allowed to use it on my actual code but I have used it several times to generate one-off scripts and visualisations and for those it can easily save hours. They aren’t software I need to edit myself though.

Pretty much everyone I’ve talked to about this says the same thing. LLMs are useful for one-off scripts or quickly generating boilerplate. It just turns out that those tasks don’t make up the majority of programming work unless you are in a bullshit job anyway.

We aren’t yet great at knowing when an LLM will save time and when it will inflate it.

That’s a great read. I’ve used AI a few times, and I’ve never used any of the code it’s spat out. The only thing it’s helped me with was telling me how to solve a bug when I knew what the problem was, but didn’t know the right RFC the solution was in (RFC 2047, about encoding non-ASCII text in email headers, if you’re interested).
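For anyone curious what RFC 2047 looks like in practice, here is a minimal sketch using Python’s standard `email.header` module. The sample subject line and the variable names are made up for illustration; this is not the code from the bug mentioned above, just the general idea of wrapping non-ASCII header text in an ASCII-only “encoded-word”.

```python
from email.header import Header, decode_header, make_header

# Hypothetical subject line containing non-ASCII characters.
subject = "Grüße aus Köln"

# Encode it as an RFC 2047 encoded-word: =?charset?encoding?payload?=
encoded = Header(subject, charset="utf-8").encode()

# Decode it again to confirm the round trip preserves the text.
decoded = str(make_header(decode_header(encoded)))

print(encoded)   # ASCII-only, safe to put in a mail header
print(decoded)   # the original text
```

The encoded form is plain ASCII, which is the whole point: mail headers were specified as ASCII, so anything else has to be wrapped like this and unwrapped by the receiving client.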

One big issue I have with AI is how utterly reliant on RegEx it is. Everything can be solved with a RegEx! Even if it’s a terrible solution with horrendous performance implications, just throw more RegEx at it! Need to parse HTML? Guess what! RegEx!
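To make the HTML complaint concrete, here is a small sketch comparing a naive regex with Python’s built-in `html.parser`. The sample markup, the `LinkCollector` class, and the pattern are all invented for illustration; the regex is the sort of thing that tends to get suggested, not a quote from any particular tool.

```python
import re
from html.parser import HTMLParser

# Made-up sample: single-quoted attribute, a commented-out link,
# and a tag split across two lines.
SAMPLE = """
<p>See <a class="x" href='/docs'>docs</a>.</p>
<!-- <a href="/commented-out">old</a> -->
<a
   href="/multiline">next</a>
"""

class LinkCollector(HTMLParser):
    """Collects href values from real <a> start tags."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value is not None:
                    self.links.append(value)

def links_with_parser(html):
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.links

# A naive pattern that assumes one exact way of writing the tag.
NAIVE = re.compile(r'<a href="([^"]*)">')

def links_with_regex(html):
    return NAIVE.findall(html)

print(links_with_parser(SAMPLE))  # the two live links
print(links_with_regex(SAMPLE))   # only the commented-out one
```

On this sample the regex misses both real links and picks up the one inside the comment, while the parser ignores the comment and copes with quoting and whitespace. You can keep patching the pattern, but that is exactly the “throw more RegEx at it” spiral.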

I’ve been using AI to help me with some beginner-level Rust compilation checks recently.

I never once got an accurate solution, but half the time it gave me a decent enough keyword to google or broken pattern to fix myself. The other half of the time it kept giving me back my own code proudly telling me it fixed it.

Don’t worry though, AGI is right around the corner. Just one more trillion dollars bro. One trillion and we’ll provide untold value to the shareholders bro. Trust me bro.

I don’t get to decide what I code at work. My manager and product owners decide based on business needs. Most of the time it’s fixes and adding or removing functions as competing interests change priorities. But it’s really good at documenting old code while everyone figures out exactly what they want. This author is in the 80s asking why every company doesn’t immediately have a website now that the internet is available.

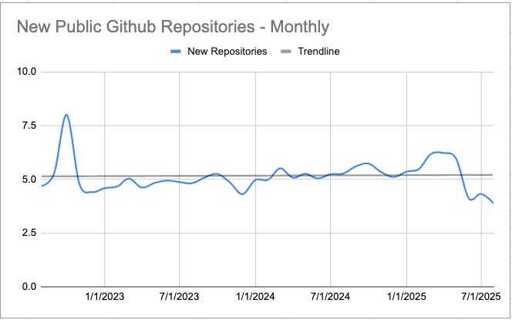

Your response seems very enterprise-focused. I think you might be missing the kind of software development that happens before it becomes enterprise. All of these metrics are very reasonable for new products, startups, consulting, and hobby hackers. If code were moving 10X now, we should reasonably see 10X new growth. These numbers show we’re not.

Arguably we should also see a 10X something in legacy and enterprise as well which is harder to measure. If we assume a 10X dev is producing 10X more code, we should expect 10X more bugs so we should also see a rise in QA positions. We’re not, so that’s a good indicator. We should also see a rise in product manager roles to handle teams that are suddenly producing 10X per member. We’re not, so that’s a good indicator. We should also see 10X new product deliveries from companies like Salesforce. We’re not, so that’s a good indicator.

You completely missed the sections on how long these tools have been available. Your point about the internet would be valid if this article was written in, say, 2021 when Copilot and Tabnine were new and hot. It would also have maybe been valid in early 2023 when people were first spinning up workflows off ChatGPT and making 10X promises. It’s now years later and we’re not seeing any growth in any of those numbers as illustrated by the article.