There is a fundamental difference this post is pretending doesn’t exist.

Trusting the abstractions of compilers and fundamental, widely used libraries is not a problem, because they are deterministic and battle-tested.

LLMs do not add a layer of abstraction. They produce code at the existing layer of abstraction.

They’re also not equivalent, because the pretense of LLMs is to remove the human from the equation, essentially saying that we don’t need that knowledge anymore. But people still do have that knowledge. Those telephone systems still work because someone knows how each part works. That will never be true for an LLM.

Compiling code to machine instructions is deterministic. That’s not the case with LLMs.
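To make that contrast concrete, here is a minimal sketch, not a claim about any particular compiler or model. It uses Python's built-in `compile` as a stand-in for a compiler, and a hypothetical `sample_token` helper with made-up weights as a toy stand-in for sampled LLM decoding. Real models can be configured to decode greedily, so treat this only as an illustration of the default sampling behavior.

```python
import random


def compile_bytes(source: str) -> bytes:
    """Compile Python source to bytecode; the same input yields the same bytes."""
    return compile(source, "<example>", "exec").co_code


def sample_token(weights: dict[str, float], rng: random.Random) -> str:
    """Toy stand-in for sampled decoding (hypothetical): pick a token by weight."""
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


source = "x = 1 + 2\n"
# Compiling the same source repeatedly always produces identical bytecode.
same_every_time = all(
    compile_bytes(source) == compile_bytes(source) for _ in range(100)
)

# Sampling from the same weights repeatedly produces different tokens.
rng = random.Random()  # deliberately unseeded
weights = {"for": 0.5, "while": 0.3, "map": 0.2}  # made-up numbers
samples = {sample_token(weights, rng) for _ in range(200)}

print("compiler output stable:", same_every_time)
print("distinct sampled tokens:", sorted(samples))
```

The first check holds on every run, while the set of sampled tokens comes from a random draw each time.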

abstracting away determinism /s

True, but my compiler isn’t demanding a $200/month subscription from me.

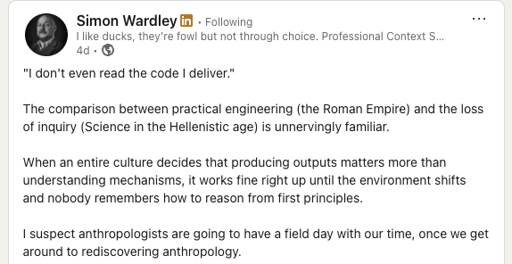

Roman builders were masters of concrete. That was how they built enormous structures like the Colosseum. They even had a special type of concrete for harbors that would react chemically with sea water and create an underwater concrete so hard that it’s still in use.

Then Rome slowly collapsed, and the secrets of concrete were lost, and weren’t rediscovered until centuries later. Scientists just figured out the thing with the sea water concrete a few years ago, but they still don’t know the formula.

History is riddled with lost and rediscovered stuff.

As a senior developer, I use the new AIs. They’re absolutely amazing and a huge timesaver if you use them well. As with any powerful tool, it’s possible to over-use and under-use it, and not achieve those gains.

However, I disagree with the comparison to knowing how hardware works. There’s a pretty big difference between these two things:

1. Letting another company design and maintain the hardware or a library, and not understanding the internals yourself.

2. Letting someone or something design and implement a core part of your code that you are responsible for maintaining, and not understanding how it works yourself.

I am not responsible for maintaining ReactJS or my Intel CPU. Not understanding them means I might lose some performance.

I am responsible for the product my company produces. All of our code needs to be understood in-house. You can outsource creation of it, or have an LLM do it, but the company needs to understand it internally.

If only it was just a problem of understanding.

The thing is: Programming isn’t primarily about engineering. It’s primarily about communication.

That’s what allows developers to deliver working software without understanding how a compiler works: they can express ideas in terms of other ideas that already have a well-defined relationship to those technical components that they don’t understand.

That’s also why generative AI may end up setting us back a couple of decades. If you’re using AI to read and write the code, there is very little communicative intent making it through to the other developers. And it’s a self-reinforcing problem. There’s no use reading AI-generated code to try to understand the author’s mental model, because very little of their mental model makes it through to the code.

This is not a technical problem. This is a communication problem. And programmers in general don’t seem to be aware of that, which is why it’s going to metastasize so viciously.