cross-posted from: https://lemmy.ca/post/61948688

Excerpt:

“Even within the coding, it’s not working well,” said Smiley. “I’ll give you an example. Code can look right and pass the unit tests and still be wrong. The way you measure that is typically in benchmark tests. So a lot of these companies haven’t engaged in a proper feedback loop to see what the impact of AI coding is on the outcomes they care about. Lines of code, number of [pull requests], these are liabilities. These are not measures of engineering excellence.”

Measures of engineering excellence, said Smiley, include metrics like deployment frequency, lead time to production, change failure rate, mean time to restore, and incident severity. And we need a new set of metrics, he insists, to measure how AI affects engineering performance.
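As a rough illustration that is not from the article: the first four measures Smiley lists overlap with the commonly cited DORA delivery metrics, and they can be computed from plain deployment records. The `Deployment` record, its field names, and the rule that a failure starts at deploy time are all assumptions made for this sketch. Incident severity is left out because it is a classification rather than a rate.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one change shipped to production."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def hours(delta: timedelta) -> float:
    """Convert a timedelta to fractional hours."""
    return delta.total_seconds() / 3600


def delivery_metrics(deploys: list[Deployment], window_days: float) -> dict:
    """Compute four delivery metrics over an observation window of `window_days` days."""
    if not deploys:
        raise ValueError("need at least one deployment")
    failures = [d for d in deploys if d.failed]
    # Assumption: a failure begins at deploy time, so restore time is measured from deployed_at.
    restore_hours = [
        hours(d.restored_at - d.deployed_at) for d in failures if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time_hours": median(hours(d.deployed_at - d.committed_at) for d in deploys),
        "change_failure_rate": len(failures) / len(deploys),
        # None when no failed deployment has a recorded restore time yet.
        "mean_time_to_restore_hours": mean(restore_hours) if restore_hours else None,
    }


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 9, 0)
    history = [
        Deployment(t0, t0 + timedelta(hours=2)),
        Deployment(t0, t0 + timedelta(hours=4), failed=True, restored_at=t0 + timedelta(hours=5)),
    ]
    print(delivery_metrics(history, window_days=2))
```

None of these numbers care how many lines of code or pull requests went into a deployment, which is the point Smiley is making.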

“We don’t know what those are yet,” he said.

One metric that might be helpful, he said, is measuring tokens burned to get to an approved pull request – a formally accepted change in software. That’s the kind of thing that needs to be assessed to determine whether AI helps an organization’s engineering practice.
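A minimal sketch of the "tokens burned per approved pull request" idea, assuming each PR record carries the number of tokens the AI tooling consumed while producing it (the `PullRequest` record and its fields are hypothetical). One design choice worth stating: tokens spent on PRs that were rejected or abandoned still count in the numerator, since they were burned on the way to the changes that did get accepted.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    """Hypothetical PR record."""
    tokens_used: int  # tokens the AI tooling consumed while producing this PR
    approved: bool    # whether the change was formally accepted


def tokens_per_approved_pr(prs: list[PullRequest]) -> float:
    """Total tokens across all PRs (approved or not) divided by the number of approved PRs."""
    approved = sum(1 for pr in prs if pr.approved)
    if approved == 0:
        # No accepted change: the cost per approved PR is unbounded.
        return float("inf")
    total_tokens = sum(pr.tokens_used for pr in prs)
    return total_tokens / approved


if __name__ == "__main__":
    history = [
        PullRequest(tokens_used=120_000, approved=True),
        PullRequest(tokens_used=300_000, approved=False),
        PullRequest(tokens_used=80_000, approved=True),
    ]
    print(tokens_per_approved_pr(history))  # 250000.0
```

Tracked over time, a rising value would suggest the tooling is costing more per accepted change, even if raw PR counts go up.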

To underscore the consequences of not having that kind of data, Smiley pointed to a recent attempt to rewrite SQLite in Rust using AI.

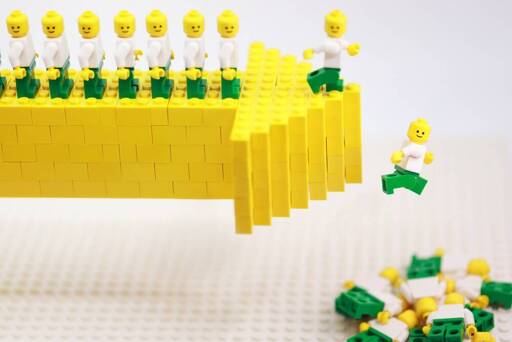

“It passed all the unit tests, the shape of the code looks right,” he said. “It’s 3.7x more lines of code that performs 2,000 times worse than the actual SQLite. Two thousand times worse for a database is a non-viable product. It’s a dumpster fire. Throw it away. All that money you spent on it is worthless.”

All the optimism about using AI for coding, Smiley argues, comes from measuring the wrong things.

“Coding works if you measure lines of code and pull requests,” he said. “Coding does not work if you measure quality and team performance. There’s no evidence to suggest that that’s moving in a positive direction.”

“The other way to look at this is like there’s no free lunch here,” said Smiley. “We know what the limitations of the model are. It’s hard to teach them new facts. It’s hard to reliably retrieve facts. The forward pass through the neural nets is non-deterministic, especially when you have reasoning models that engage an internal monologue to increase the efficiency of next token prediction, meaning you’re going to get a different answer every time, right? That monologue is going to be different.

“And they have no inductive reasoning capabilities. A model cannot check its own work. It doesn’t know if the answer it gave you is right. Those are foundational problems no one has solved in LLM technology. And you want to tell me that’s not going to manifest in code quality problems? Of course it’s going to manifest.”

This is from an AI consultant, btw.

And yet governments everywhere insist on more “AI investment”.

Fear of missing out.

And heavy lobbying too, I imagine.

Vaguely based words from an AI consultant? Are these the end times?

Great article from a grounded professional. As usual for The Reg, there are some great comments on the article, including this one responding to a user who mentioned that AI image generation seems useful but flawed:

“that wouldn’t be possible without someone with some talent” Exactly. And that person with talent isn’t getting paid. A very astute person has said that the purpose of AI is to give wealthy people access to skill without giving skilled people access to wealth.

the purpose of AI is to give wealthy people access to skill without giving skilled people access to wealth.

Perfectly said.

My boss: more lines of code is more better! Look how many more lines of code AI wrote!

I don’t code but I know enough about it to know your boss is an idiot.

ChatGPT said he was super smart though…

The one time one of my managers suggested using lines of code to measure programming performance, I very vocally offered to write a “loop unroller” (something that transforms “for” loops into the loop body repeated as many times as the loop loops) for the rest of the team.

My favourite one at my place: labelling things as “AI Search✨” when I know (because I was one of the people coding it) that it’s just a plain old non-AI algorithm. We just lied so we could say we have AI search.

Organizations using this without someone who knows what they’re looking at reading the code are pretending they have good processes for evaluating code quality, performance, and security, which basically no company does.

Proving that code that looks like it works is wrong, slow, or dangerous is more difficult than writing it.

It’s more difficult to automate the evaluation of software than it is to hire competent people to evaluate and write software and be responsible for it.

So congratulations, corporate boardroom AI addicts, you’ve turned a difficult-to-solve problem into a nearly impossible one.